Saw a recent Twitter poll from Casey Brown on the topic of figure scripting vs. "Illustrator magic". Figure scripting is the practice of writing a program that generates the figure completely, while "Illustrator magic" means putting graphs into Illustrator and arranging things by hand until they look the way you like. Some folks really like programming it all, while I've argued that I don't think this is very efficient, and so arguments go back and forth on Twitter about it. Thing is, I think ALL of us having this discussion are already way out in the right-hand tail in terms of trying to be tidy about our computational work, while many (most?) folks out there haven't ever really thought about this at all and could potentially benefit from a discussion of what an organized computational analysis would look like in practice. So anyway, here's what we do, along with some discussion of why and what the tradeoffs are (including talking about figure scripting).

First off, what is the goal? Here, I'm talking about how one might organize a computational analysis in finalized form for a paper (will touch on exploratory analysis later). In my mind, the goal is to have a well-organized, well-documented, readable and, most importantly, complete and consistent record of the computational analysis, from raw data to plots. This has a number of benefits: 1. it is more likely to be free of mistakes; 2. it is easier for others (including within the lab) to understand and reproduce the details of your analysis; 3. it is more likely to be free of mistakes. Did I mention more likely to be free of mistakes? Will talk about that more in a coming post, but that's been the driving force for me as the analyses that we do in the lab become more and more complex.

[If you want to skip the details and get more to the principles behind them, please skip down a bit.]

Okay, so what we've settled on in lab is to have a folder structured like this (version controlled or Dropboxed, whatever):

I'll focus on the "paper" folder, which is ultimately what most people care about. The first thing is "extractionScripts". This contains scripts that pull out numbers from data and store them for further plot-making. Let me take this through the example of image data in the lab. We have a large software toolset called rajlabimagetools that we use for analyzing raw data (and that has its own whole set of design choices for reproducibility, but that's a story for another day). That stores, alongside the raw data, analysis files that contain things like spot counts and cell outlines and thresholds and so forth. The extraction scripts pull data from those analysis files and put it into .csv files, which are stored in extractedData. For an analogy with sequencing, this is like maybe taking some form of RNA-seq data and setting up a table of TPM values in a .csv file. Or whatever, you get the point. plotScripts then contains all the actual plotting scripts. These load the .csv files, run whatever is needed to make graphical elements (like a series of histograms or whatever), and store them in the graphs folder. finalFigures then contains the Illustrator files in which we compile the individual graphs into figures. Along with each figure (like Fig1.ai), we have a Fig1readme.txt that describes exactly what .eps or .pdf files from the graphs folder ended up in, say, Figure 1f (and, ideally, what script made them). Thus, everything is traceable back from the figure all the way to raw data. Note: within extractionScripts is a file called "extractAll.m" and in plotScripts "plotAll.R" or something like that. These master scripts basically pull all the data and make all the graphs, and we rerun these completely from scratch right before submission to make sure nothing changed. Incidentally, of course, each of the folders often has a massive number of subfolders and so forth, but you get the idea.
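To make the layout concrete, here is a minimal sketch of the "paper" folder and the per-figure readme. Big caveats: our actual scripts are in MATLAB and R, so the Python here is just for illustration. The folder names come from the description above, but the helper functions, the readme line format, and the example file names (spotCountHistogram.pdf, plotSpotCounts.R) are all made up for this example.

```python
from pathlib import Path

# Folder names taken from the post; exactly how they nest under "paper"
# is an assumption reconstructed from the prose.
PAPER_SUBFOLDERS = [
    "extractionScripts",  # scripts that pull numbers out of analysis files
    "extractedData",      # the resulting .csv tables
    "plotScripts",        # scripts that turn .csv tables into graphs
    "graphs",             # individual .pdf/.eps graphical elements
    "finalFigures",       # Illustrator files plus one readme per figure
]


def build_paper_skeleton(root):
    """Create the 'paper' folder and its subfolders under root; return its path."""
    paper = Path(root) / "paper"
    for name in PAPER_SUBFOLDERS:
        (paper / name).mkdir(parents=True, exist_ok=True)
    return paper


def write_figure_readme(paper, figure, panels):
    """Write e.g. finalFigures/Fig1readme.txt (hypothetical line format).

    panels maps a panel label to a (graph_file, script) pair, so that each
    panel can be traced back to the graph file and the script that made it.
    """
    lines = [
        f"{figure}{label}: graphs/{graph} <- plotScripts/{script}"
        for label, (graph, script) in sorted(panels.items())
    ]
    readme = Path(paper) / "finalFigures" / f"{figure}readme.txt"
    readme.write_text("\n".join(lines) + "\n")
    return readme
```

Calling `write_figure_readme(paper, "Fig1", {"f": ("spotCountHistogram.pdf", "plotSpotCounts.R")})` would write one line recording that panel 1f came from that graph file and that plot script. The exact format doesn't matter much; the point is that somebody can follow the chain backwards.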

What are the tradeoffs that led us to this workflow? First off, why did we separate things out this way? Back when I was a postdoc (yes, I've been doing various forms of this since 2007 or so), I tried to just arrange things by having a folder per figure. This seemed logical at the time, and has the benefit that the output of the scripts is in close proximity to the script itself (and the figure), but the problem was that figures kept getting endlessly rearranged and remixed, leading to endless tedious (and error-prone) rescripting to regain consistency. So now we just pull in graphical elements as needed. This makes things a bit tricky, since for any particular graph it's not immediately obvious what made that graph, but it's usually not too hard to figure out with some simple searching for filenames (and some verbose naming conventions).
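The "simple searching for filenames" part can be as basic as a grep, but here is a rough Python sketch of the idea, assuming the plotting scripts mention the output filename literally somewhere in their text. The function name and the list of script extensions are my own choices for illustration, not anything we actually have in the lab.

```python
from pathlib import Path


def find_scripts_mentioning(graph_filename, scripts_dir, extensions=(".R", ".m", ".py")):
    """Return sorted script paths under scripts_dir whose text contains graph_filename.

    Assumes each script writes its output using a literal filename, which is
    why verbose, unique graph names make this kind of search work well.
    """
    matches = []
    for path in sorted(Path(scripts_dir).rglob("*")):
        if path.is_file() and path.suffix in extensions:
            # Ignore undecodable bytes so one odd file doesn't stop the search.
            text = path.read_text(errors="ignore")
            if graph_filename in text:
                matches.append(path)
    return matches
```

This only works if the graph names are distinctive, which is one more argument for verbose naming: a search for "hist.pdf" will match half the folder, while a long descriptive name will usually hit exactly one script.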

The other thing is why have the extraction scripts separated from the plots? Well, in practice, the raw data is just too huge to distribute easily, and if it was all mushed together with the code and intermediates, the whole package would be hard to share. But, at least in our case, the more important fact is that most people don't really care about the raw data. They trust that we've probably done that part right, and what they're most interested in are the tables of extracted data. So this way, in the paper folder, we've documented how we pulled out the data while keeping the focus on what most people will be most interested in.

[End of nitty gritty here.]

And then, of course, figure scripting, the topic that brought this whole thing up in the first place. A few thoughts. I get that in principle, scripting is great, because it provides complete documentation, and also because it potentially cuts down on errors. In practice, I think it's hard to efficiently make great figures this way, so we've chosen perhaps a slightly more tedious and error-prone but flexible way to make our figures. We use scripts to generate PDFs or EPSs of all relevant graphical elements, typically not spending time to optimize even things like font size and so forth (mostly because all of those have to change so many times in the end anyway). Yes, there is a cost here in terms of redoing things if you end up changing the analysis or plot. Claus Wilke argued that this discourages people from redoing plots, which I think has some truth to it. At the same time, I think that the big problem with figure scripting is that it discourages graphical innovation and encourages people to use lazy defaults that usually suffer from bad design principles. Indeed, I would argue it's way too much work currently to make truly good graphics programmatically. Take this example:

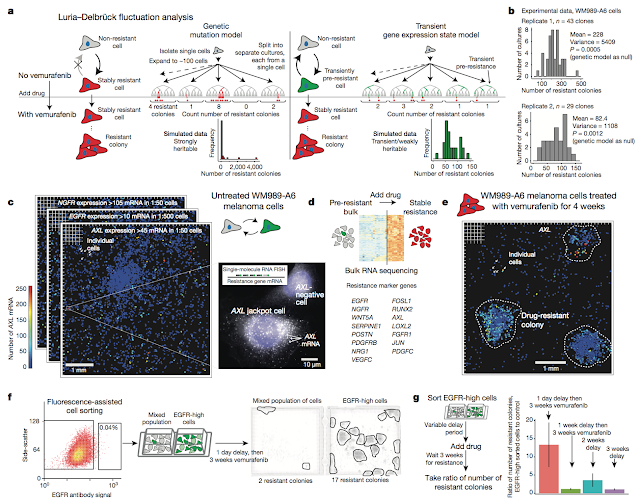

Or imagine writing a script for this one:

Maybe you like or don't like these types of figures, but either way, not only would it take FOREVER to write up a script for these (at least for me), but by the time you've done it, you would probably never build up the courage to remix these figures the dozen or so times we've reworked this one over the course of publication. It's just faster, easier, and more intuitive to do with a tool for, you know, playing with graphical elements, which I think encourages innovation. Also, many forms of labeling of graphs that reduce cognitive burden (like putting text descriptors directly next to the line or histogram that they label) are much easier in Illustrator and much harder to do programmatically, so again, this works best for us. It does also, however, introduce a human element for error, and that has happened to us, although I should say that programmatic figures are a typo away from errors as well, and that's happened, too. There is also the option to link figures, and we have done that with images in the past, but in the end, relying on Illustrator to find and maintain links as files get copied around just ended up being too much of a headache.

Note that this is how we organize final figures, but what about exploratory data analysis? In our lab, that ends up being a bit more ad-hoc, although some of the same principles apply. Following the full strictures for everything can get tedious and inhibitory, but one of the main things we try and encourage in the lab is keeping a computational lab notebook. This is like an experimental lab notebook, but, uhh, for computation. Like "I did this, hoped to see this, here's the graph, didn't work." This has been, in practice, a huge win for us, because it's a lot easier to understand human descriptions of a workflow than try and read code, especially after a long time and double especially for newcomers to the lab. Note: I do not think version control and commit messages serve this purpose, because version control is trying to solve a fundamentally different problem than exploratory analysis. Anyway, talked about this computational lab notebook thing before, should write something more about it sometime.

One final point: like I said, one of the main benefits to these sorts of workflows is that they help minimize mistakes. That said, mistakes are going to happen. There is no system that is foolproof, and ultimately, the results will only be as trustworthy as the practitioner is careful. More on that in another post as well.

Anyway, very interested in what other people's workflows look like. Almost certainly many ways to skin the cat, and curious what the tradeoffs are.

I have had to, on occasion, perform data archeology on other people's work. If any of it was as well organized as the folder structure in your screenshot, which is eminently clear at first glance, I would be thrilled to pieces. Thank you for spreading these ideas.

Everything in this article rings true to my experience, especially the split between programmatic figure creation and final by-hand edits. A few small suggestions that may be useful:

- If an Illustrator license is too expensive, generating figures as SVG and editing them in Inkscape (open source, free) works ok. Microsoft Office can even import SVG files now.

- The downside of editing figures manually is that the vector drawing software will, on occasion, corrupt the file. Complex software, sadly, always has bugs. So even when using version control, I prefer to do figure edits in Dropbox (or equivalent) so I have minute-by-minute change rollback. It's safer, especially close to a deadline. I copy key revisions in the main project folder, whatever that may be; for me, it's a git repo.

I firmly endorse the idea that all work, computational or otherwise, should be journaled. My model is to, every day [1], record: what I intended to achieve, what I actually did, and what I thought about the results at the time, including relevant figures and links to data. I have found this to be indispensable even when I'm the only person who reads the result. Including key figures in the journal means that I can use it in place of slide decks in group meetings, which saves a lot of time. Writing about a problem also helps me think about it systematically, make better decisions, and retrospectively review whether I already considered and (wrongly or rightly) rejected an alternative plan [2].

I agree that commit messages cannot fulfill the objectives of a journal entry. Commit messages are short text snippets describing the intent of the associated change set, which for a commit is generally the smallest unit of work that can be independently verified or composed. Commit messages are inseparable from the commit. Journal entries are standalone multimedia documents that tell a story across many tasks/commits, can be annotated at a later date, and can be redistributed and repurposed. Despite this, I still find it convenient to keep journal entries, data, and code in the same version controlled repository so that each journal entry is associated with a specific project state. This also allows me to edit journal entries without violating the principle that lab notebooks are immutable. But the fundamental point is that I agree that computational/everything else journals, regardless of how they are implemented, are extremely useful.

[1] A task that is contiguous over multiple days, broken up only by the need to sleep or eat, may only get one long entry. A task that is truly 100% explained by a commit message and its associated change set (e.g., "proofread ___ R01") doesn't get a journal entry.

[2] Someone who is more of an auditory learner might be better served by incorporating a voice recorder, voice transcription, or maybe even video.

This sounds like a very sensible workflow, thanks for sharing it!
